PureData Fundamentals

Logging my adventures into PureData with a brief intro

I look back at the last few years of my life in programming, and I find myself questioning what I found to be the most fun part of it all: was it conquering hard problems? Learning new languages? Making something useful that I might use every day? Or learning about a new field that I find myself infatuated with?

It's hard for me to answer those questions. I like learning new languages, but learning a language doesn't translate directly into creating something with it. I know Haskell, but I can't say I've made anything good with it, nor can I say I fully know Haskell. Haskell might be the end-all be-all language of languages, but it's an incredibly difficult hill to climb.

Problem solving is another matter: you discover a question and ask yourself how you can solve it correctly and quickly. Correctness is not always equivalent to quickness. There's a strong line between solvability and provability, and that in itself is one of computer science's largest barriers.

Usefulness of my creations is another thing; I don't create a lot of things that I would often find myself using. It's one thing to create some CLI todo app that might help you get your life in order, but it's another thing to create something that has a layer of consistency that keeps you using something day-in day-out.

Lastly, I think the one thing I like doing the most is audio programming. Audio programming is not like many other things. Creating with audio brings a kind of satisfaction like nothing else I know. Making audio is such a joy, and a fun thing to chase.

Oscillation

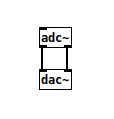

The first step on the PureData journey is understanding how to create audio. Audio is a physical product of pushing air around at varying strengths. It has no discreteness to it in the physical world; it is purely continuous. A computer, however, needs digital information, and for that we require a discrete translation of physical information to digital. To convert audio from digital to physical, we require something called a DAC (digital-to-analogue converter). The reverse process uses an ADC (analogue-to-digital converter).

The ADC is effectively a gateway for the computer to observe its immediate physical surroundings through some peripheral, such as a microphone. The DAC is a way for the computer to speak out. By simply plugging an ADC into a DAC, you can hear the loss of information in the audio quality.

The loss of information you hear comes from the computer sampling an analogue signal and transforming it into a discrete, quantized array of integers. There are a number of reasons why the signal is not as clear as what you hear in the real world.
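To make the quantization step concrete, here is a rough sketch in Python. It assumes samples are floats in [-1.0, 1.0] and that the converter stores signed integers of a given bit depth; the function names `quantize` and `dequantize` are made up for this illustration and are not part of PureData.

```python
def quantize(sample: float, bits: int = 16) -> int:
    """Map a float in [-1.0, 1.0] to a signed integer of the given bit depth."""
    max_int = 2 ** (bits - 1) - 1          # 32767 for 16-bit audio
    clipped = max(-1.0, min(1.0, sample))  # anything louder gets clipped
    return round(clipped * max_int)


def dequantize(value: int, bits: int = 16) -> float:
    """Map the integer back to a float; the rounding error is gone for good."""
    return value / (2 ** (bits - 1) - 1)


# Round-trip one sample through a deliberately coarse 8-bit converter.
original = 0.123456789
restored = dequantize(quantize(original, bits=8), bits=8)
error = abs(original - restored)  # small but non-zero: this is the loss
```

The fewer bits you allow, the larger that error gets, which is one of the reasons the round trip never sounds quite like the real thing.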

Plugging an ADC into a DAC is effectively the same as the identity function id(x) in programming: whatever you feed into it is the output, plus or minus some quality differences. Maybe the output is too loud, and you want to reduce it by 50% so you don't blow out your eardrums. We can do that too.

![Here the ADC's output is multiplied by a factor of 0.5 in order to cut the amplitude's maximum range from [-1.0, 1.0] to [-0.5, 0.5].](https://ste5e.site/blog/pd-fundamentals/adc50.png)

Here the ADC's output is multiplied by a factor of 0.5 in order to cut the amplitude's maximum range from [-1.0, 1.0] to [-0.5, 0.5].
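Outside of PureData, the same idea is just multiplying every sample by a constant. A minimal sketch in Python, where `scale` is a hypothetical helper and the list stands in for one block of incoming samples:

```python
from typing import List


def scale(signal: List[float], gain: float) -> List[float]:
    """Multiply every sample by a constant gain, like a signal multiply by 0.5."""
    return [sample * gain for sample in signal]


# A block of samples spanning the full [-1.0, 1.0] range...
block = [-1.0, -0.5, 0.0, 0.5, 1.0]
# ...comes out spanning [-0.5, 0.5] after halving.
quieter = scale(block, 0.5)
```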

Signal math is the most important principle in PureData. Before, we could only do math on primitives like integers or Booleans, but now we can do it on signals. A signal can be thought of as a moving piece of data; it's a primitive in a similar vein to regular numbers. You can add signals, multiply signals by other signals or flat scalar values, divide signals, and subtract them. Each operation lends itself to a style of audio synthesis, such as additive, subtractive, or frequency modulation (FM) synthesis.

Additive and subtractive are exactly as they sound, and Frequency Modulation synthesis is the basis of broadcast radio. I'll talk more about FM synthesis a little later.

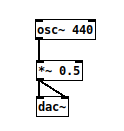

Let's cut out the adc~ for now. We don't really want it. We're going to start with generalized synthesis, which will require the use of oscillators. An oscillator is essentially a moving clock that constantly pumps out a stream of changing numbers. The basic oscillator is the osc~ node, which pumps out a pure sine wave. A sine wave is special because it produces no overtones, and it sounds very soft.

Here is an oscillator pushing out what is considered the concert 'A' note, at a frequency of 440 Hertz (440 cycles per second).

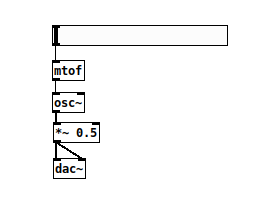
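For a feel of what an oscillator is doing under the hood, here is a small Python sketch that generates the raw samples of a 440 Hertz sine wave. The 44,100 samples-per-second rate is an assumption, and `sine_osc` is a made-up name; this illustrates the idea rather than how osc~ is actually implemented.

```python
import math

SAMPLE_RATE = 44100  # assumed samples per second


def sine_osc(freq_hz: float, num_samples: int, sample_rate: int = SAMPLE_RATE):
    """Return num_samples of a sine wave at freq_hz, each in [-1.0, 1.0]."""
    return [
        math.sin(2.0 * math.pi * freq_hz * n / sample_rate)
        for n in range(num_samples)
    ]


# One second of concert A.
one_second = sine_osc(440.0, SAMPLE_RATE)
```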

We've completed what is effectively a digital synthesizer, but it's not very useful. To change the frequency of the oscillator, we need to supply a number that controls its speed. We can combine two aspects: a visual slider to control it, and a facility to convert a number into a frequency that sounds musically meaningful. There are roughly 22,000 different frequencies to choose from, but music theory only revolves around a handful of those. The mtof function converts a MIDI note value to a corresponding frequency value. MIDI is a communication standard that lets electronic instruments and software talk to each other over a common protocol.
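The conversion itself is short enough to write out. This sketch uses the standard equal-temperament formula, where MIDI note 69 is the 440 Hertz concert A and every 12 notes doubles the frequency. I'm assuming this matches what mtof does for ordinary note values; it ignores any clamping PureData may apply at the extremes.

```python
def mtof(midi_note: float) -> float:
    """Convert a MIDI note number to a frequency in Hertz (equal temperament)."""
    return 440.0 * 2.0 ** ((midi_note - 69.0) / 12.0)


concert_a = mtof(69)   # the A above middle C
middle_c = mtof(60)    # middle C, a little under 262 Hertz
octave_up = mtof(81)   # twelve notes above concert A
```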

A piano has eighty-eight keys, while MIDI covers a range of 128 note values (0 to 127) that map to different frequencies. By adding a horizontal slider called hslider, we get a visual element in our PureData workspace that we can click and drag to different positions, corresponding to an integer range that we can hook into our nodes.

At this point we have a successful synthesizer. It takes an integer value and produces a corresponding frequency, multiplied by 0.5 for our ears.

Modulation

I closed that last section off by saying "integer value" to produce the sound output. Isn't that weird? It's because you can go beyond raw numbers and introduce variation into the system by simply allowing other signals to control certain parameters. In synthesizer terms this would be called a control voltage (CV), and you can attach CVs to almost any parameter you can think of.

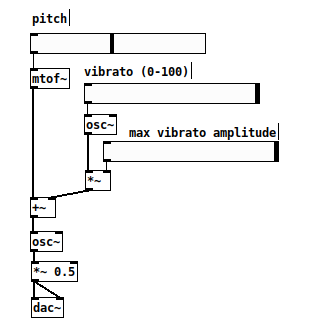

First, let's start with something called vibrato. Vibrato is what happens when a person sings and their larynx vibrates to alternate the pitch ever so slightly. In electronics, we can reproduce this with another oscillator that modifies the original frequency control value by a tiny amount.

By modulating the input pitch, and adjusting the frequency and amplitude of the vibrato oscillator, you can make some truly alien sounds with this alone. We convert mtof to mtof~ to produce a signal instead of a flat value (note the tilde here, which denotes that the output is a signal). Then, by producing a secondary signal on the side for vibrato, we can add the two together with basic arithmetic operators to create a new frequency, one whose pitch is modified slightly by a zero to 100 Hertz signal.
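Here is roughly the same vibrato idea as a Python sketch: a slow oscillator nudges the frequency of the main one. The sample rate, the function name `vibrato_tone`, and its parameters are assumptions for illustration. Because the frequency changes from sample to sample, the sketch keeps a running phase instead of computing the sine from the time directly.

```python
import math

SAMPLE_RATE = 44100  # assumed samples per second


def mtof(midi_note: float) -> float:
    """Standard equal-temperament MIDI-to-frequency conversion."""
    return 440.0 * 2.0 ** ((midi_note - 69.0) / 12.0)


def vibrato_tone(midi_note, vibrato_rate_hz, vibrato_depth_hz, num_samples,
                 sample_rate=SAMPLE_RATE):
    """Sine tone whose frequency wobbles by +/- vibrato_depth_hz."""
    base_freq = mtof(midi_note)
    phase = 0.0  # position within the current cycle, from 0.0 to 1.0
    out = []
    for n in range(num_samples):
        # The second oscillator: a slow sine acting as the control signal.
        lfo = math.sin(2.0 * math.pi * vibrato_rate_hz * n / sample_rate)
        freq = base_freq + vibrato_depth_hz * lfo
        # Halve the amplitude for our ears, as in the earlier patches.
        out.append(0.5 * math.sin(2.0 * math.pi * phase))
        phase = (phase + freq / sample_rate) % 1.0
    return out


# Concert A with a 5 Hertz wobble of +/- 10 Hertz, a tenth of a second long.
samples = vibrato_tone(69, 5.0, 10.0, SAMPLE_RATE // 10)
```

Setting the depth to zero gives back the plain tone, and pushing the rate and depth up is where the alien sounds start.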

Using control signals is pretty common in a lot of places, and it adds greatly to variation in sound design. You can control filters, synthesizers, and other effects using these kinds of signals. But what if we tried combining two signals into one? There are a few ways to go about this if we revisit the categories of synthesis.

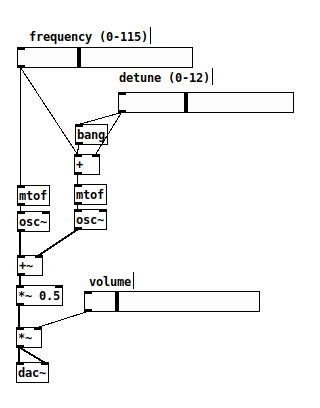

Here we start with a frequency slider and pass it into two different pipelines. The right-hand side will subtract a certain amount based on the amount of detune we want, then add that to the original value. We use an additional bang node here because the inputs to the plus operation act differently based on which side is getting input: only the left inlet triggers a new output, while the right inlet just stores its value.

Additive synthesis is the idea of combining two (or more) signals into one final waveform output. However, adding two identical signals only makes the result louder, so we need to incorporate variation similar to vibrato. For this, we de-tune the other signal by a certain amount to deviate it from the original frequency. In this case, it's detuned by a range of 0 to 12 notes (semitones). By going down exactly 12 notes, we reach the octave below, which wouldn't really do much for our output because it would resonate at exactly one octave lower in the frequency domain.
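A rough Python sketch of the detuned pair, under the same assumptions as before (a 44,100 Hertz sample rate and the standard MIDI-to-frequency formula). The name `detuned_pair` is hypothetical, and the sum is halved so the result stays inside [-1.0, 1.0].

```python
import math

SAMPLE_RATE = 44100  # assumed samples per second


def mtof(midi_note: float) -> float:
    """Standard equal-temperament MIDI-to-frequency conversion."""
    return 440.0 * 2.0 ** ((midi_note - 69.0) / 12.0)


def detuned_pair(midi_note, detune_semitones, num_samples,
                 sample_rate=SAMPLE_RATE):
    """Add a tone to a copy of itself lowered by detune_semitones."""
    f_original = mtof(midi_note)
    f_detuned = mtof(midi_note - detune_semitones)  # detune goes downward
    out = []
    for n in range(num_samples):
        t = n / sample_rate
        a = math.sin(2.0 * math.pi * f_original * t)
        b = math.sin(2.0 * math.pi * f_detuned * t)
        out.append(0.5 * (a + b))  # halve the sum to avoid clipping
    return out


# Concert A against a copy lowered by a fifth of a semitone.
samples = detuned_pair(69, 0.2, SAMPLE_RATE // 10)
```

With a detune of 0 the two tones are identical and the output is just the original sine; with a detune of 12 the second tone sits exactly one octave below.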

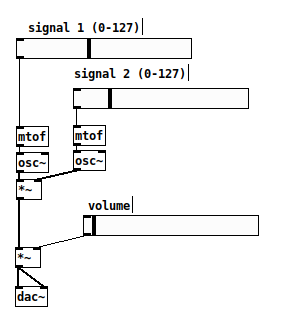

Subtractive synthesis works the same way, but what if we focus on multiplying two signals by one another? If both signals are in the range of [-1, 1], then so is their product, and the result contains the sum and the difference of their frequencies. This means we can multiply two signals of different frequencies together to get a new one, where one signal acts as the "carrier" that carries the other signal to the final output. This forms the basis for radio signals, where one signal running at a certain speed can carry a waveform with it to be decoded elsewhere. (Strictly speaking, multiplying two signals like this is amplitude modulation, also called ring modulation; true FM changes the carrier's frequency instead, much like the vibrato patch earlier.)

It looks different than additive synthesis, but not by much. Instead we simply multiply our two signals by one another and we get some interesting sounds. You can add amplitude adjusters for both signals to experiment with lowering their maximum amplitude to see what the end product looks like.
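To see why multiplying two sine waves produces new frequencies, here is a small numeric check in Python of the trigonometric identity sin(a)·sin(b) = ½[cos(a − b) − cos(a + b)]. The function names are made up for the example. Technically this operation is usually called ring modulation, but it is the same multiply-two-signals idea described above.

```python
import math


def multiplied(f_carrier: float, f_mod: float, t: float) -> float:
    """Two sine waves multiplied together at time t (in seconds)."""
    return (math.sin(2.0 * math.pi * f_carrier * t)
            * math.sin(2.0 * math.pi * f_mod * t))


def sum_and_difference(f_carrier: float, f_mod: float, t: float) -> float:
    """The same value, written as tones at the difference and sum frequencies."""
    difference_tone = math.cos(2.0 * math.pi * (f_carrier - f_mod) * t)
    sum_tone = math.cos(2.0 * math.pi * (f_carrier + f_mod) * t)
    return 0.5 * (difference_tone - sum_tone)


# A 440 Hertz carrier times a 100 Hertz signal: the result is made of
# a 340 Hertz tone and a 540 Hertz tone, at every point in time.
largest_gap = max(
    abs(multiplied(440.0, 100.0, n / 44100)
        - sum_and_difference(440.0, 100.0, n / 44100))
    for n in range(1000)
)
```

`largest_gap` comes out as floating-point noise, meaning the two formulas describe the same waveform.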

FM synthesis, in this crude approach, ends up sounding pretty darn similar to our vibrato synthesizer, and even a little bit like the additive synthesizer. FM synthesis, in this regard, is actually very potent, if you can account for all the parameters in controlling the different aspects.

For the rest of this post, I will focus on expanding on the FM synthesizer for demonstration purposes.

Interacting

We have a rudimentary understanding of basic synthesis, but how about we add a way to control it from the outside world? Let me introduce the Novation LaunchControl.

An older MIDI device produced by Novation, it has 16 knobs, 8 buttons, and a few other buttons for controlling software. In PureData, it does nothing until you build something for it.

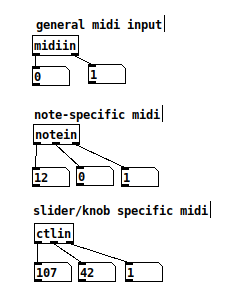

First, I should mention we'll see some new nodes in here, all related to MIDI input. PureData has the capability to send and receive MIDI events over USB (and network, if you want to get fancy), so we plug in the LaunchControl and attach some listeners to it to see what the output values are.

These nodes will help us debug our input and output when creating MIDI applications. MIDI messages usually come with a note (or controller) number and a velocity (or value). Buttons send either 0 or 127, while knobs send anything from 0 to 127.
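PureData's MIDI nodes hand us these numbers already unpacked, but it can help to see what a raw three-byte MIDI message looks like. This Python sketch decodes note and control-change messages using the status bytes from the MIDI 1.0 standard (0x80 note off, 0x90 note on, 0xB0 control change). The `MidiEvent` type and `decode` function are hypothetical, and I'm not assuming which specific note or controller numbers the LaunchControl sends.

```python
from typing import NamedTuple


class MidiEvent(NamedTuple):
    kind: str      # "note_on", "note_off", "control_change" or "other"
    channel: int   # 1 to 16
    number: int    # note number or controller number, 0 to 127
    value: int     # velocity or controller value, 0 to 127


def decode(message: bytes) -> MidiEvent:
    """Decode a three-byte MIDI channel message."""
    status, data1, data2 = message[0], message[1], message[2]
    kind_bits = status & 0xF0          # upper four bits: message type
    channel = (status & 0x0F) + 1      # lower four bits: channel 0-15
    if kind_bits == 0x90 and data2 > 0:
        kind = "note_on"
    elif kind_bits in (0x80, 0x90):
        kind = "note_off"              # note on with velocity 0 means off
    elif kind_bits == 0xB0:
        kind = "control_change"
    else:
        kind = "other"
    return MidiEvent(kind, channel, data1, data2)


button_press = decode(bytes([0x90, 60, 127]))  # a button pressed fully
knob_turn = decode(bytes([0xB0, 21, 64]))      # a knob at about halfway
```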

Unfortunately, detangling MIDI messages is not as fun as one might think. If we have one channel to receive all MIDI messages from, then sending them to the correct place will be ever so annoying with a lot of wires everywhere. We'll have to rely on send and receive functions to send data, along with the ability to route based on note/knob type.

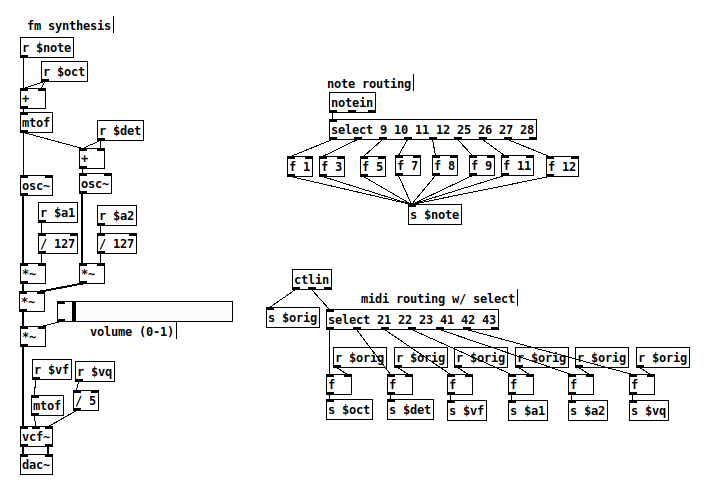

For this next and last example I'll be sharing, I want to introduce new elements to the synthesizer. I'll explain them as best I can.

- send and receive are two nodes that do precisely as they say. They send data from one node and the receiver gets the value back. This needs matching identifiers, and can be abbreviated with s and r
- use ctlin and notein to receive inputs from a MIDI device
- use select to route messages that match a list of values and route the outputs correctly. This will be used to map notes from the device into new ones, as well as route messages from ctlin to send them to the appropriate locations
- use a vcf~ to filter a signal by a certain frequency with a resonance variable

Using send and receive will greatly clean up our code to prevent lines from being dragged all over the place, especially when it comes to routing MIDI inputs. Throwing important values into send nodes creates a publisher/subscriber model between sections of the application which is pretty important for linking different components together.
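If the publisher/subscriber idea is unfamiliar, here is a loose Python analogy. The `Bus` class plays the role of matching send and receive names, and `route_control` stands in for picking a destination based on which knob moved. All of the names, and the controller numbers 21 and 22, are invented for this example; none of it is PureData's API.

```python
from collections import defaultdict


class Bus:
    """Named channels: anything sent to a name reaches all of its receivers."""

    def __init__(self):
        self._receivers = defaultdict(list)

    def receive(self, name, callback):
        self._receivers[name].append(callback)

    def send(self, name, value):
        for callback in self._receivers[name]:
            callback(value)


def route_control(bus, routes, controller, value):
    """Forward a knob value to the named destination for that controller."""
    name = routes.get(controller)
    if name is None:
        return False  # no route for this controller, so drop the message
    bus.send(name, value)
    return True


synth_state = {}


def remember(key):
    """Build a receiver that stores whatever arrives under the given key."""
    def callback(value):
        synth_state[key] = value
    return callback


bus = Bus()
bus.receive("cutoff", remember("cutoff"))
bus.receive("resonance", remember("resonance"))

ROUTES = {21: "cutoff", 22: "resonance"}  # hypothetical controller numbers
route_control(bus, ROUTES, 21, 100)       # knob 21 moved to value 100
```

The sender never needs to know who is listening, which is what keeps the wires from being dragged all over the patch.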

My final product looks like this.

Here we have a simple FM synthesizer which accepts notes from a MIDI device as well as control knobs. We parameterized frequency, detune, amplitude, filter and filter resonance.

Conclusion

There are a lot more ideas I want to finalize before the month is over. You might have seen a sneak preview of my Novation Launchpad, an old MIDI device that's an 8x8 grid of MIDI buttons with color LEDs. I've written a lot of code for it in the past and I hope to dust off a lot of components for it in the near future.

Visual programming environments are a little hard to build applications with, I will admit, and I think there are a lot of things left to solve. MIDI devices have their own requirements, and the Launchpad has certain limitations that I am keenly aware of. I'll talk more about those at a later point when I think I have something really cool ready.

Hopefully this serves as a good jumping-off point for someone to be interested in audio programming. Thanks for reading!